Leaky ReLU


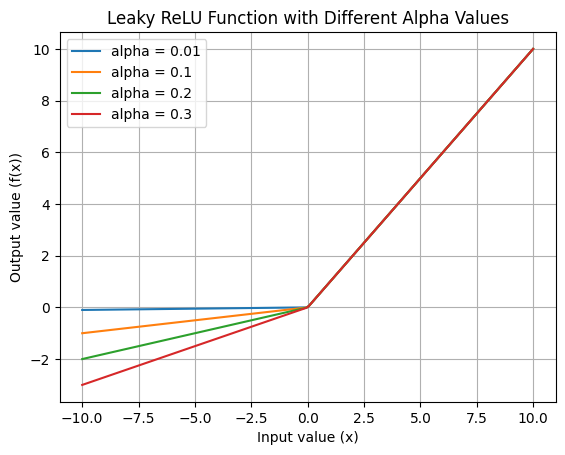

To overcome these limitations, the leaky ReLU activation function was introduced (Deep Learning and Parallel Computing Environment for Bioengineering Systems, 2019). Leaky ReLU is a modified version of ReLU designed to fix the problem of dead neurons.


The choice between leaky ReLU and ReLU depends on the specifics of the task, and it is recommended to experiment with both activation functions to determine which one works best.

One such activation function is the leaky rectified linear unit (leaky ReLU).

PyTorch, a popular deep learning framework, provides a convenient implementation of the leaky ReLU function through its functional API.
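As a minimal sketch of that functional API, the snippet below applies `torch.nn.functional.leaky_relu` to a small tensor and compares it with plain ReLU. The input values are made up for illustration, and `negative_slope=0.01` is simply PyTorch's default written out explicitly.

```python
import torch
import torch.nn.functional as F

# Illustrative inputs: two negative values, zero, and one positive value.
x = torch.tensor([-2.0, -0.5, 0.0, 1.5])

# Leaky ReLU: positive inputs pass through, negative inputs are scaled
# by negative_slope instead of being zeroed.
y = F.leaky_relu(x, negative_slope=0.01)

# Plain ReLU for comparison: negative inputs are clamped to zero.
r = F.relu(x)

print(y)
print(r)
```

The same operation is also available as a module, `torch.nn.LeakyReLU`, which may be more convenient inside `nn.Sequential`.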

Leaky rectified linear unit, or leaky ReLU, is an activation function used in neural networks (NN) and is a direct improvement upon the standard rectified linear unit (ReLU) function. It was designed to address the dying ReLU problem, where neurons can become inactive and stop learning during training.
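Written out, and assuming a fixed slope $\alpha$ for negative inputs (the symbol is introduced here for illustration; small values such as 0.01 are common), the function and its derivative are:

$$
f(x) =
\begin{cases}
x, & x > 0 \\
\alpha x, & x \le 0
\end{cases}
\qquad
f'(x) =
\begin{cases}
1, & x > 0 \\
\alpha, & x < 0
\end{cases}
$$

Setting $\alpha = 0$ recovers standard ReLU, whose derivative is zero for every negative input. A neuron whose pre-activation stays negative then receives no gradient, which is the dying ReLU problem described above.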

The following table summarizes the key differences between vanilla ReLU and its two variants, leaky ReLU and parametric ReLU.

The distinction between ReLU and leaky ReLU, though subtle in their mathematical definition, translates into significant practical implications for training stability, convergence speed, and the overall performance of neural networks.

As a layer, leaky ReLU is a leaky version of a rectified linear unit activation: it allows a small gradient when the unit is not active.
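To make "a small gradient when the unit is not active" concrete, here is a rough pure-Python sketch of one gradient-descent step on a single-weight neuron. The helper names (`sgd_step`, `relu_grad`, and so on), the squared-error loss, and all numeric values are assumptions chosen for illustration, not part of any library.

```python
def relu(z):
    return z if z > 0 else 0.0

def relu_grad(z):
    return 1.0 if z > 0 else 0.0

def leaky_relu(z, alpha=0.01):
    return z if z > 0 else alpha * z

def leaky_relu_grad(z, alpha=0.01):
    return 1.0 if z > 0 else alpha

def sgd_step(w, x, target, act, act_grad, lr=0.1):
    """One gradient step on the loss 0.5 * (act(w * x) - target) ** 2."""
    z = w * x
    a = act(z)
    # Chain rule: dL/dw = (a - target) * act'(z) * x
    grad = (a - target) * act_grad(z) * x
    return w - lr * grad

# Hypothetical starting point: the pre-activation w * x is negative,
# so the unit is "not active".
w0, x, target = -1.0, 1.0, 1.0

w_relu = sgd_step(w0, x, target, relu, relu_grad)
w_leaky = sgd_step(w0, x, target, leaky_relu, leaky_relu_grad)

print(w_relu)   # weight is unchanged: the ReLU gradient is zero here
print(w_leaky)  # weight moves slightly toward the active region
```

Under ReLU the weight cannot recover from this state by gradient descent alone, while under leaky ReLU each step nudges it a little.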

A leaky rectified linear unit (leaky ReLU) is an activation function where the negative section allows a small gradient instead of being completely zero, helping to reduce the risk of dead neurons in neural networks.
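The three activations discussed here (ReLU, leaky ReLU, and parametric ReLU) can be sketched side by side in plain Python. This is an illustrative scalar version under stated assumptions, not any framework's implementation: the `PReLU` class and its `slope_grad` method are hypothetical names, and the initial slope of 0.25 is just a starting value.

```python
def relu(x):
    # Negative inputs are zeroed.
    return x if x > 0 else 0.0

def leaky_relu(x, negative_slope=0.01):
    # Negative inputs are scaled by a fixed, hand-chosen slope.
    return x if x > 0 else negative_slope * x

class PReLU:
    """Parametric ReLU: same shape as leaky ReLU, but the slope is learned."""

    def __init__(self, init_slope=0.25):
        self.slope = init_slope  # trainable parameter (assumed initial value)

    def forward(self, x):
        return x if x > 0 else self.slope * x

    def slope_grad(self, x):
        # Derivative of the output with respect to the slope parameter:
        # zero for positive inputs, x itself for non-positive inputs.
        return 0.0 if x > 0 else x

prelu = PReLU()
for value in (-2.0, 3.0):
    print(value, relu(value), leaky_relu(value), prelu.forward(value))
```

The only difference between the last two is who picks the slope: leaky ReLU fixes it as a hyperparameter, while parametric ReLU updates it during training using a gradient like the one `slope_grad` returns.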